Remember what a prediction interval is: an interval or set of values where we predict that future observations will lie. Generally, the prediction interval has two main pieces that determine its width: a piece representing the uncertainty about the predicted mean (or other parameter), which is the confidence interval part, and a piece representing the variability of the individual observations around that mean. The confidence interval is fairly robust due to the Central Limit Theorem, and in the case of a random forest the bootstrapping helps as well. But the prediction interval is completely dependent on the assumptions about how the data are distributed given the predictor variables; the CLT and bootstrapping have no effect on that part.

The prediction interval should be wider where the corresponding confidence interval would also be wider. Other things that affect the width of the prediction interval are assumptions about whether the variance is equal or not; this has to come from the knowledge of the researcher, not from the random forest model.

A prediction interval does not make sense for a categorical outcome (you could do a prediction set rather than an interval, but most of the time it would probably not be very informative).

We can see some of the issues around prediction intervals by simulating data where we know the exact truth. This particular data follows the assumptions for a linear regression and is fairly straightforward for a random forest fit. We know from the "true" model that when both predictors are 0 the mean is 10; we also know that the individual points follow a normal distribution with a standard deviation of 1. This means that the 95% prediction interval based on perfect knowledge for these points would be from 8 to 12 (well, actually 8.04 to 11.96, but rounding keeps it simpler). Any estimated prediction interval should be wider than this (not having perfect information adds width to compensate) and should include this range.

Let's look at the intervals from regression: `fit1 <- lm(y ~ x1 * x2)`, then `(pred.lm.ci <- predict(fit1, newdat, interval='confidence'))` and `(pred.lm.pi <- predict(fit1, newdat, interval='prediction'))`. We can see there is some uncertainty in the estimated means (confidence interval), and that gives us a prediction interval that is wider than (but includes) the 8 to 12 range.

Now let's look at the interval based on the individual predictions of individual trees (we should expect these to be wider, since the random forest does not benefit from the assumptions, which we know to be true for this data, that the linear regression does): `library(randomForest)` and `fit2 <- randomForest(y ~ x1 + x2, ntree=1001)`.
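The 8.04 to 11.96 range, and the way the two pieces of a prediction interval combine, can be checked with a few lines of Python using only the standard library. This is a minimal sketch and not part of the original answer: the value `se_mean = 0.2` is a made-up standard error chosen for illustration, the helper name `normal_interval` is hypothetical, and combining the two pieces as a root sum of squares assumes normal errors with constant variance, as in the simulated data. It also uses normal quantiles throughout, whereas a regression fit would normally use t quantiles.

```python
from math import sqrt
from statistics import NormalDist


def normal_interval(center, sd, level=0.95):
    """Central interval of a normal distribution with the given center and sd."""
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for a 95% level
    return center - z * sd, center + z * sd


# Perfect knowledge: the mean (10) and standard deviation (1) are known exactly.
true_lo, true_hi = normal_interval(10.0, 1.0)

# Hypothetical estimated model: the mean itself is uncertain.
se_mean = 0.2  # assumed standard error of the estimated mean (illustrative only)
sigma = 1.0    # spread of individual observations around the mean

# Piece 1 alone: uncertainty about the mean (confidence interval).
ci_lo, ci_hi = normal_interval(10.0, se_mean)

# Pieces 1 and 2 together: mean uncertainty plus observation noise.
pi_lo, pi_hi = normal_interval(10.0, sqrt(se_mean**2 + sigma**2))

print(f"perfect-knowledge interval: {true_lo:.2f} to {true_hi:.2f}")
print(f"confidence interval:        {ci_lo:.2f} to {ci_hi:.2f}")
print(f"prediction interval:        {pi_lo:.2f} to {pi_hi:.2f}")
```

The first printed line reproduces the 8.04 to 11.96 range. Because the second piece (`sigma`) does not shrink with more data, the prediction interval stays close to that range however small `se_mean` becomes, while the confidence interval keeps narrowing.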

The scenario mentioned in the top-voted answer will only occur when every leaf of all trees have data points belonging to only one class in them.This is partly a response to Dareddy (since it will not fit in a comment) and partly a response to the original post. right leaf in 2nd child split has 75% yellow so prediction probability of class yellow will be 75%.

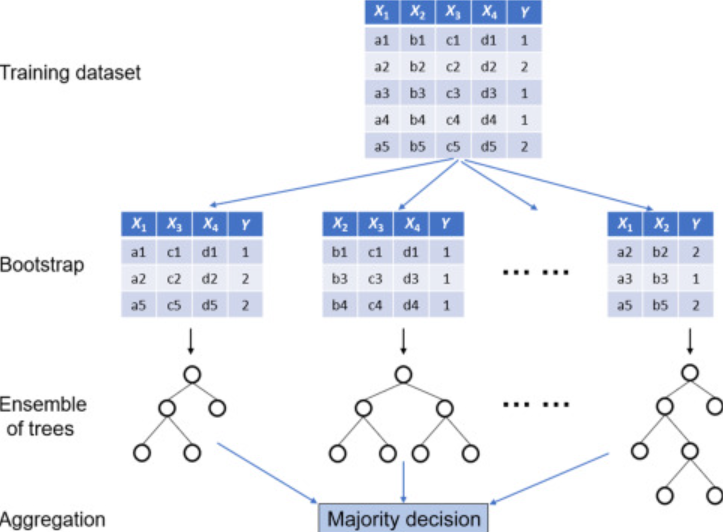

Look at this image of a single decision tree to understand what it means to have different classes within the leaf. A single tree calculates the probability by looking at the distribution of different classes within the leaf. In other words, since Random Forest is a collection of decision trees, it predicts the probability of a new sample by averaging over its trees. The class probability of a single tree is the fraction of samples of the same class in a leaf. I am afraid the top-voted answer isn't correct (at least for the latest sklearn implementation).Īccording to the docs, the probability of prediction is computed as the mean predicted class probabilities of the trees in the forest. Is there any way to get the next 5 digit for example? The first issue is that the results represent the probabilities of the labels without being affected by the size of my data? The second issue is that the results show only one digit which is not very specific in some cases where the 0.701 probability is very different from 0.708. However, I have two main issues with the results about which I am not confident. Where the second column is for class: Spam. Predictions = classifier.predict_proba(Xtest) Instead of having Spam/Not Spam as labels of emails, I wish to have only for example: 0.78 probability a given email is Spam.įor such purpose, I'm using predict_proba() with RandomForestClassifier as following: clf = RandomForestClassifier(n_estimators=10, max_depth=None, Sometimes I need to have the probabilities of labels/classes instead of the labels/classes themselves.
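The averaging described in the answer can be sketched in plain Python. This only illustrates the arithmetic and is not sklearn's code: the leaf counts are made up, and `leaf_probability` and `forest_probability` are hypothetical helper names. It also suggests one possible reason a 10-tree forest can show probabilities with a single digit: when every leaf is pure (which trees grown with `max_depth=None` tend to produce), each tree contributes 0 or 1, so the average is a multiple of 0.1.

```python
def leaf_probability(class_counts, target):
    """Fraction of the training samples in a leaf that belong to `target`."""
    total = sum(class_counts.values())
    return class_counts.get(target, 0) / total


def forest_probability(leaves, target):
    """Mean of the per-tree leaf fractions, one leaf per tree for a given sample."""
    per_tree = [leaf_probability(counts, target) for counts in leaves]
    return sum(per_tree) / len(per_tree)


# A single impure leaf: 3 yellow samples and 1 blue sample, so P(yellow) = 0.75.
print(leaf_probability({"yellow": 3, "blue": 1}, "yellow"))

# Ten trees whose leaves are all pure: 7 leaves hold only spam, 3 hold only not_spam.
pure = [{"spam": 5}] * 7 + [{"not_spam": 4}] * 3
print(forest_probability(pure, "spam"))

# Ten trees with impure leaves: the average is no longer a multiple of 0.1.
impure = [{"spam": 3, "not_spam": 1}] * 4 + [{"spam": 2, "not_spam": 3}] * 6
print(forest_probability(impure, "spam"))
```

Under these assumptions, finer-grained probabilities come from more trees or from leaves that mix classes, not from asking for more printed digits.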
